J Bonilla - Will Robots Kill the Music Pros?

12 October 2020

J Bonilla

Co-Founder/Creative Director, The Elements Music

The Elements has a roster of hundreds of the best independent artists and composers from around the globe, a robust production team in its Los Angeles and London offices, and a body of work that includes brands such as Nike, Samsung, BMW, Google, NBA, Porsche, Dyson, and Uber, to name a few, as well as direct work with leading TV networks, video game companies, and production companies.

“It’s unreal that I get to wake up every day and create music with insanely talented artists and composers, work with teammates that I love and respect, and continue to build a company that I’m wildly passionate about!”

Will Robots Kill the Music Pros?

My company makes original music for media and the robots are coming for us. I see them approaching in the distance, the Ampers and Jukedecks of the world, with their Artificial Intelligence-generated tracks. One of them probably just updated a line of code in their algorithm that sped up our obsolescence by five years. These are the menacing thoughts that wash over me at times. Then I usually pivot to cocky defiance. There’s just no way AI will ever be able to hear the subtle nuances between guitar tones or snare drum samples like I can, right?

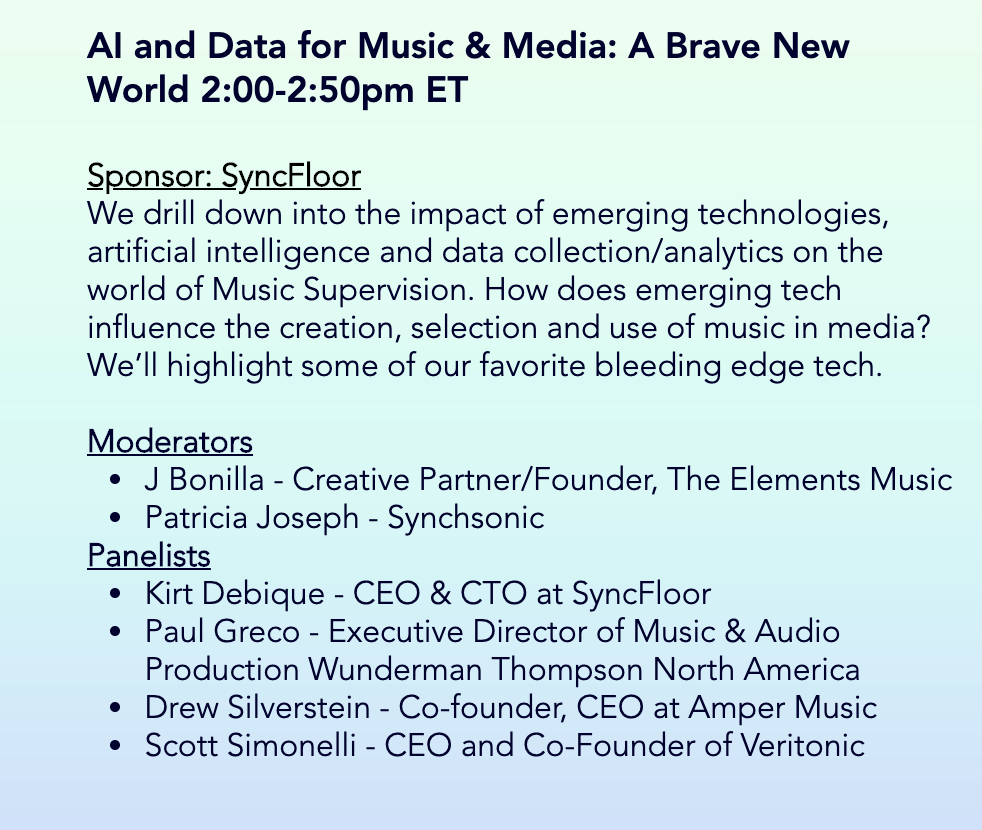

Like most folks who make their living finding or creating music, I’m not an expert on Artificial Intelligence or Machine Learning. But I’ve been watching, and if there’s a way to make friends with the robots, I’m into it. So, setting aside the much broader topic of whether the robots are eventually coming for the heads of all of us in the creative industries, I’ll share my practical take on the current state of AI music creation, and how close the robots may actually be.

The big, sensational question here is whether the algorithms alone will ever be “better than humans” at creating music. Is there a looming apocalypse for flesh-and-blood music creators? A decent starting point is an honest look at the quality of AI-generated music right now. A handful of AI music creation companies like the aforementioned Amper and Jukedeck have made serious strides in quality over the past few years, and there are several other relevant players in the space that seem to be marching the tech forward. I don’t think even they would argue that they’re anywhere close to “better than human” yet, but they’re certainly light years beyond what computing pioneer Alan Turing achieved in 1951, when he generated a few simple melodies with a computer named “Mark II” that was the size of a small room. I also suspect that these companies would be quick to assure us that driving humans out of music isn’t in the business plan.

Truth is, I’ve heard some “AI-generated music” that’s made me sit up a little straighter in my chair and really take notice. After a deeper dive, I’ve learned that most of the AI-generated music out there that might send a shiver down the spine of a human composer was likely created with the help of a human composer, who would have edited or embellished the piece to make it more palatable. In a sense, that speaks to the degree of difficulty at play here. It could very well be more challenging to create an AI algorithm that could generate a trumpet solo with as much humanity and nuance as Miles Davis than to create one capable of beating an expert F-16 fighter pilot in a dogfight or besting a team of top attorneys at interpreting contracts. The two latter feats have already been achieved by AI, but if Miles were still with us, I promise he wouldn’t yet be fussed about losing his gig to an algorithm.

One factor in why the music creation robots are encroaching fairly slowly is that the “music industry” is relatively small in terms of dollars and cents. The global music industry is worth about $50 billion annually, versus, say, the global healthcare industry, which rings in at around $10 trillion annually. So, though the tech giants of Silicon Valley and Beijing have taken some interest in AI for the arts and entertainment, they’ll likely keep funneling most of their AI development resources into industries that may ultimately offer colossal returns on investment. We’re probably not one of them.

But just because it may be smaller, more modestly funded entities driving much of the innovation around AI for music and audio doesn’t mean it’s not making leaps. One example is how deepfake voice technology, largely driven by “basement programmers”, is opening up new possibilities in music creation. Though still a bit rough around the edges, there are existing algorithms that would allow me to get a “virtual” Billie Eilish or Jay-Z or Frank Sinatra to sing or rap on my next music project. I suspect that type of AI tool could be widely available within a few years, once the quality levels up a couple of notches and some sticky issues around copyright and ownership get ironed out.

If there’s an alarm to sound for human music creators at this point, it’s that AI-generated music could soon start making a real dent in some corners of the music-for-media business, particularly in the lower-budget “production music library” sector. This is the type of music we’re used to hearing as underscore on YouTube videos, social media clips, and even in reality TV shows, to name just a few examples. I think it’s very likely we could see major tech and entertainment firms buying AI music companies in order to cheaply and quickly create royalty-free underscore for large swaths of content. In what may be an example of this approach, TikTok parent ByteDance recently purchased Jukedeck.

But there’s a serious problem facing AI music creation companies as they hone their platforms and strive to create material that’s on par with the music we all know and love: some styles of music just feel fundamentally human. And I believe AI will never fully solve that problem. Instead, it’ll go around it. It’ll redefine it. As AI-generated music continues to develop and gain credibility over time, it will actually change our taste in music. It’ll create new instruments. The AI version of a Miles Davis trumpet solo will ultimately manifest itself in some new form that’s more readily attainable for the tech. And by the time we get there, whatever that music ends up sounding and feeling like, you and I probably won’t “get it” anyway – we’ll likely offer some version of “back in my day” to explain why our stuff was better – but I bet our grandkids will love it.

So, even though Drake and Ed Sheeran probably aren’t losing sleep right now about the rise of AI music creation, I do think it will eventually create seismic shifts throughout every sector of recorded music, though it’ll likely take much longer to prompt a new “AI Music” category at the Grammys than it will to displace composers of library production music.

The tendency in conversations around AI and music/audio is to land on the comforting assumption that we’ll be able to clasp hands with the robots and skip off into the sunset – the machines firmly understanding their role as primarily our “tools”. But before we get overconfident in our ability to maintain any long-term supremacy in music and audio, let’s consider one more case: researchers at Aston University in Birmingham, England, recently developed an AI-powered algorithm that can diagnose Parkinson’s disease using a simple recorded voice sample. The patient simply says (sings) “ahhhhh”, and the algorithm can diagnose the disease with nearly 99% accuracy. There had previously been no reliable single test for Parkinson’s diagnosis. With that context as a backdrop, my optimistic little notion that no AI could ever hear the nuances between guitar tones or snare samples as well as I can seems pretty naïve.

Even with such amazing advances being made in AI, the reality on the ground for me and creative companies like mine is that there’s still some safety in complexity. Not in technical complexity (that’s where the robots thrive), but in social complexity. Even if AI-generated music reached parity with human-created music tomorrow, it wouldn’t account for the fact that the types of projects we work on require not just great music, but lots of nuanced human conversation, coordination, and trust. AI doesn’t feel like an existential threat to that in the short term. As music creators, the more transactional our relationship is with our clients or the end consumer, the more vulnerable we’ll be to the algorithms.

That said, I’m not sure even social complexity can save any of us from the robots in the long run. But for now, let’s enjoy our moment.